注意(请根据自己的情况调整删除或增加ua信息,我提供的规则中包含了不常用的蜘蛛ua,几乎用不着,若您的网站比较特殊,需要不同的蜘蛛爬取,建议仔细分析规则,将指定ua删除即可)

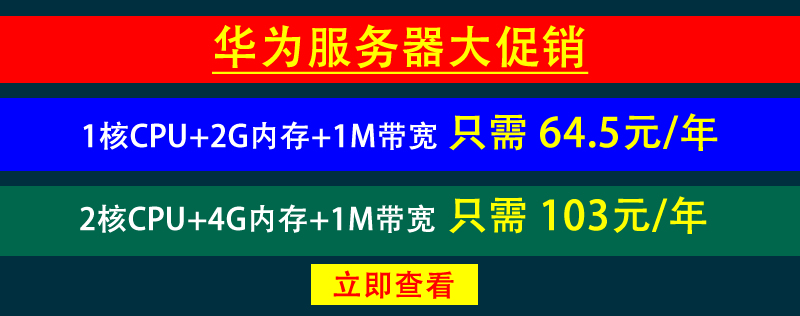

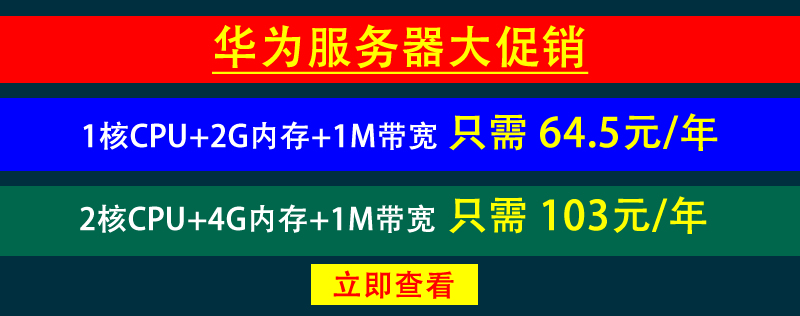

屏蔽后的效果

1、我网站是nginx,下面是我的屏蔽规则,将规则添加到配置文件的server段里面,当这些蜘蛛来抓取时会返回444;

2、IIS7/IIS8/IIS10及以上web服务请在网站根目录下创建web.config文件,并写入如下代码即可;

if ($http_user_agent ~ "DotBot|MegaIndex|MegaIndex.ru|BLEXBot|Qwantify|qwantify|semrush|Semrush|serpstatbot|hubspot|python|Bytespider|Go-http-client|Java|PhantomJS|SemrushBot|Scrapy|Webdup|AcoonBot|AhrefsBot|Ezooms|EdisterBot|EC2LinkFinder|jikespider|Purebot|MJ12bot|WangIDSpider|WBSearchBot|Wotbox|xbfMozilla|Yottaa|YandexBot|Jorgee|SWEBot|spbot|TurnitinBot-Agent|mail.RU|perl|Python|Wget|Xenu|ZmEu|^$" )

{

return 444;

}

3、IIS6请在isapi重写组件中添加规则

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<system.webServer>

<rewrite>

<rules>

<rule name="Block spider">

<match url="(^robots.txt$)" ignoreCase="false" negate="true" />

<conditions>

<add input="{HTTP_USER_AGENT}" pattern="DotBot|MegaIndex|MegaIndex.ru|BLEXBot|Qwantify|qwantify|semrush|Semrush|serpstatbot|hubspot|python|Bytespider|Go-http-client|Java|PhantomJS|SemrushBot|Scrapy|Webdup|AcoonBot|AhrefsBot|Ezooms|EdisterBot|EC2LinkFinder|jikespider|Purebot|MJ12bot|WangIDSpider|WBSearchBot|Wotbox|xbfMozilla|Yottaa|YandexBot|Jorgee|SWEBot|spbot|TurnitinBot-Agent|mail.RU|perl|Python|Wget|Xenu|ZmEu|^$"

ignoreCase="true" />

</conditions>

<action type="AbortRequest" />

</rule>

</rules>

</rewrite>

</system.webServer>

</configuration>

4、apache请在.htaccess文件中添加如下规则即可:

#Block spider

RewriteCond %{HTTP_USER_AGENT} (DotBot|MegaIndex|MegaIndex.ru|BLEXBot|Qwantify|qwantify|semrush|Semrush|serpstatbot|hubspot|python|Bytespider|Go-http-client|Java|PhantomJS|SemrushBot|Scrapy|Webdup|AcoonBot|AhrefsBot|Ezooms|EdisterBot|EC2LinkFinder|jikespider|Purebot|MJ12bot|WangIDSpider|WBSearchBot|Wotbox|xbfMozilla|Yottaa|YandexBot|Jorgee|SWEBot|spbot|TurnitinBot-Agent|mail.RU|perl|Python|Wget|Xenu|ZmEu|^$) [NC]

RewriteRule !(^/robots.txt$) - [F]

<IfModule mod_rewrite.c>

RewriteEngine On

#Block spider

RewriteCond %{HTTP_USER_AGENT} "DotBot|MegaIndex|MegaIndex.ru|BLEXBot|Qwantify|qwantify|semrush|Semrush|serpstatbot|hubspot|python|Bytespider|Go-http-client|Java|PhantomJS|SemrushBot|Scrapy|Webdup|AcoonBot|AhrefsBot|Ezooms|EdisterBot|EC2LinkFinder|jikespider|Purebot|MJ12bot|WangIDSpider|WBSearchBot|Wotbox|xbfMozilla|Yottaa|YandexBot|Jorgee|SWEBot|spbot|TurnitinBot-Agent|mail.RU|perl|Python|Wget|Xenu|ZmEu|^$" [NC]

RewriteRule !(^robots\.txt$) - [F]

</IfModule>

本文由技术小学生原创,转载请注明出处:https://blog.tag.gg/showinfo-36-35757-0.html 否则追究法律责任。

文章评论 本文章有个评论